CAN XL has recently emerged as a contender in the 10 Mbit/s in-vehicle network space, alongside 10BASE-T1S Automotive Ethernet. What does CAN XL bring to earn its place on the vehicle bus?

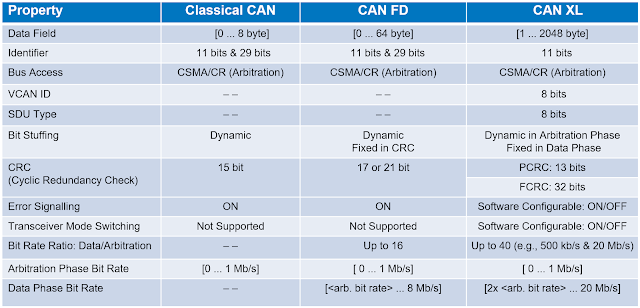

CAN XL builds upon the foundation of CAN and CAN FD, both protocols with a long history in the automotive industry. Figure 1 summarizes the characteristics of the three CAN variants.

Figure 1. A comparison of the characteristics of CAN, CAN FD and CAN XL.

CAN XL increases throughput with a Fast Mode bit rate of up to 20 Mbit/s in the data phase, while the arbitration phase (the fields other than data) runs at 1 Mbit/s. The larger data field also contributes to the improved bandwidth of CAN XL: its maximum length is 2048 bytes, compared to 64 bytes for CAN FD and 8 bytes for classic CAN.
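To put those payload sizes in perspective, here is a minimal Python sketch that counts how many frames each variant would need to carry one message, and roughly how long a full CAN XL data field occupies the bus at the Fast Mode rate. The 1500-byte message size and the helper names `frames_needed` and `data_phase_time_us` are assumptions chosen for illustration. The estimate deliberately ignores headers, CRC fields, stuff bits and any transport-layer segmentation overhead, so real figures will be somewhat worse.

```python
import math

# Maximum data-field lengths in bytes, as quoted in the text above.
MAX_PAYLOAD_BYTES = {"Classic CAN": 8, "CAN FD": 64, "CAN XL": 2048}


def frames_needed(message_bytes: int, max_payload: int) -> int:
    """Minimum frame count for a message, ignoring segmentation overhead."""
    return math.ceil(message_bytes / max_payload)


def data_phase_time_us(payload_bytes: int, bit_rate_bps: float) -> float:
    """Time in microseconds to shift out the data field alone.

    Ignores header, CRC, stuff bits and the arbitration phase.
    """
    return payload_bytes * 8 / bit_rate_bps * 1e6


if __name__ == "__main__":
    message_bytes = 1500  # hypothetical message size, chosen for illustration
    for variant, max_payload in MAX_PAYLOAD_BYTES.items():
        count = frames_needed(message_bytes, max_payload)
        print(f"{variant}: {count} frame(s) for {message_bytes} bytes")

    # A full 2048-byte CAN XL data field at the 20 Mbit/s Fast Mode rate.
    full_field_us = data_phase_time_us(2048, 20e6)
    print(f"CAN XL full data field: about {full_field_us:.1f} us")
```

Under these simplifying assumptions, the 1500-byte message fits in a single CAN XL frame, whereas CAN FD needs 24 frames and classic CAN needs 188.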