|

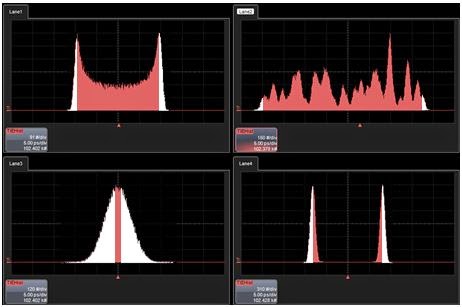

| Figure 1: An example of using histograms to plot the statistical distribution of edge arrival times |

A simple yet telling application of statistical analysis to the characterization of jitter is to use histograms to compile a statistical distribution of edge arrival times (Figure 1). If we were to look at four different signals, we would see four different distributions, but those distributions would all have at least one thing in common: the outside edges of each histogram exhibit a similar shape. There's a "falling-off" shape to those edges that's half-Gaussian, or random, in character.
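To make the histogram idea concrete, here is a minimal Python sketch that builds one from simulated data. Everything in it is an assumption for illustration: the function names, the picosecond values, and the toy model itself (each edge shifted by a fixed offset of either sign, plus a Gaussian term). It is not how any particular oscilloscope compiles its histograms.

```python
import math
import random
from collections import Counter


def simulate_edge_errors(n, rj_sigma_ps=2.0, dj_offset_ps=5.0, seed=0):
    """Return n simulated edge-arrival-time errors, in picoseconds.

    Assumed toy model (not from the article): each edge is shifted by
    +dj_offset_ps or -dj_offset_ps, plus a Gaussian term with standard
    deviation rj_sigma_ps.
    """
    rng = random.Random(seed)
    return [
        rng.choice((-dj_offset_ps, dj_offset_ps)) + rng.gauss(0.0, rj_sigma_ps)
        for _ in range(n)
    ]


def histogram(samples, bin_width_ps=1.0):
    """Count samples per bin; each key is the left edge of a bin in ps."""
    counts = Counter(
        math.floor(s / bin_width_ps) * bin_width_ps for s in samples
    )
    return dict(sorted(counts.items()))


def print_histogram(hist, scale=50):
    """Print a crude text histogram, one row per bin."""
    peak = max(hist.values())
    for left_edge, count in hist.items():
        bar = "#" * round(scale * count / peak)
        print(f"{left_edge:7.1f} ps | {bar}")


if __name__ == "__main__":
    print_histogram(histogram(simulate_edge_errors(20_000)))
```

With these assumed parameters, the printout should show two humps with Gaussian-looking tails falling off on the outside edges, which is the shape the histograms have in common.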

Figure 2: Jitter has two main components: random (in white in all four histograms) and deterministic (in salmon)

The other component, seen in a salmon hue, is the deterministic component of jitter. This component determines the shape of the distribution between the two tails. Deterministic jitter is bounded, so assuming a sufficient sample size, that part of the distribution will not grow as you measure longer and longer.
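The difference between bounded and unbounded behavior can be sketched with simulated data. The following Python snippet is only an illustration under assumed values: a bounded term that lands at either -5 ps or +5 ps, and a Gaussian term with a 2 ps standard deviation. It tracks the peak-to-peak spread of each as the number of samples grows.

```python
import random


def peak_to_peak(samples):
    """Spread between the largest and smallest sample."""
    return max(samples) - min(samples)


def running_peak_to_peak(samples, checkpoints):
    """Peak-to-peak over the first k samples, for each k in checkpoints."""
    return [peak_to_peak(samples[:k]) for k in checkpoints]


rng = random.Random(1)
n = 100_000
checkpoints = [100, 1_000, 10_000, 100_000]

# Assumed bounded (deterministic) term: edges land at -5 ps or +5 ps.
bounded = [rng.choice((-5.0, 5.0)) for _ in range(n)]
# Assumed unbounded (random) term: Gaussian, 2 ps standard deviation.
gaussian = [rng.gauss(0.0, 2.0) for _ in range(n)]

bounded_pp = running_peak_to_peak(bounded, checkpoints)
gaussian_pp = running_peak_to_peak(gaussian, checkpoints)

for k, dj, rj in zip(checkpoints, bounded_pp, gaussian_pp):
    print(f"{k:>7} samples: bounded {dj:5.1f} ps, Gaussian {rj:5.1f} ps")
```

The bounded term can never spread beyond 10 ps no matter how long you measure, while the Gaussian term's spread can only stay the same or widen as more samples arrive.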

Figure 3: Measuring peak-to-peak jitter is a losing proposition

Well, then, what about measuring the standard deviation of jitter? Nope, that's not a very good idea either. The standard deviation of the distribution isn't going to be very meaningful if the distribution isn't Gaussian. You might see distributions with different shapes that have the same standard deviation. Thus, as a single figure of merit, standard deviation doesn't tell you very much.
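A quick way to convince yourself of this is to build differently shaped distributions with the same standard deviation. The Python sketch below uses three assumed shapes, all scaled to a standard deviation of about 5 ps: a Gaussian, a two-point distribution, and a uniform distribution. The shapes and numbers were chosen only for illustration.

```python
import math
import random
import statistics

rng = random.Random(2)
n = 20_000
sigma_ps = 5.0  # assumed target standard deviation for all three shapes

# Gaussian with standard deviation sigma_ps.
gaussian = [rng.gauss(0.0, sigma_ps) for _ in range(n)]
# Two-point distribution at +/- sigma_ps also has standard deviation sigma_ps.
two_point = [rng.choice((-sigma_ps, sigma_ps)) for _ in range(n)]
# Uniform on [-a, a] has standard deviation a / sqrt(3).
half_width = sigma_ps * math.sqrt(3.0)
uniform = [rng.uniform(-half_width, half_width) for _ in range(n)]


def tail_fraction(samples, limit_ps):
    """Fraction of samples farther than limit_ps from zero."""
    return sum(abs(s) > limit_ps for s in samples) / len(samples)


shapes = {"gaussian": gaussian, "two-point": two_point, "uniform": uniform}
for name, samples in shapes.items():
    sd = statistics.pstdev(samples)
    tail = tail_fraction(samples, 2 * sigma_ps)
    print(f"{name:>9}: std dev {sd:5.2f} ps, beyond 2 sigma {tail:.2%}")
```

All three report nearly the same standard deviation, yet only the Gaussian puts any samples beyond two standard deviations. Those far-out edges are the ones that matter for bit errors, and the single number hides them.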

So at the end of the day, it comes back to bit errors and the propensity of our system to generate them. Because random jitter is unbounded, if we look at enough bits, we are statistically guaranteed to see a bit error. By the late 1990s, what mattered was how many bit errors we expect to see for a given number of bits, a figure known as the bit-error ratio (BER). If we get one bit error in one Mbit of data, that's a BER of 10⁻⁶. The typical target specified by many serial-data standards is a BER of 10⁻¹². That's one bit error in 1000 Gbits of data, and that's a number that you hear a lot in conjunction with compliance testing.
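The BER arithmetic is simple enough to check in a few lines of Python. The function names below are made up for this sketch, and the 10 Gb/s bit rate is an assumed figure used only to show how long such a measurement takes.

```python
def bit_error_ratio(bit_errors, bits_observed):
    """BER is the number of bit errors divided by the number of bits."""
    return bit_errors / bits_observed


def bits_per_error(ber):
    """Average number of bits between errors at a given BER."""
    return 1.0 / ber


def seconds_per_error(ber, bit_rate_bps):
    """Average time between errors at a given BER and bit rate."""
    return bits_per_error(ber) / bit_rate_bps


if __name__ == "__main__":
    # One error in one Mbit of data.
    print(bit_error_ratio(1, 10**6))

    # Bits between errors at a BER of 1e-12, expressed in Gbits.
    print(bits_per_error(1e-12) / 1e9)

    # Assumed 10 Gb/s link: average seconds between errors.
    print(seconds_per_error(1e-12, 10e9))
```

At the assumed 10 Gb/s, a link running at a BER of 10^-12 averages one error roughly every 100 seconds, which gives a feel for why measuring down to that level by counting errors takes a while.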

The late 1990s saw a shift in emphasis to BER as a function of jitter. We'll turn our attention to that topic in our next installment in this informal survey of the history of jitter.
